By Ed Kanegsberg and Barbara Kanegsberg, BFK Solutions, with Dr. Darren Williams and Tanner Volek, SHSU

Anyone who has mixed paint, made a cocktail, or baked cookies realizes that mixtures can have very different characteristics from those of their components. In critical and precision product cleaning, mixtures are powerful tools to improve cleaning effectiveness in aqueous and solvent-based processes. We’ll begin with solvency. Let’s say you must remove an adherent soil from your product and have tried out a number of solvents without success. Don’t give up. If neither solvent A nor solvent B works, a mixture of the two could prove highly effective.

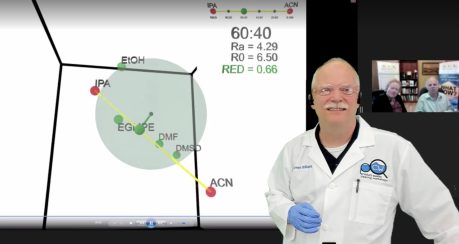

At the Product Quality Cleaning Workshop (PQCW21), Professor Darren Williams and Tanner Volek demonstrated that mixtures of two solvents can be far more powerful than either alone. Read on to see the demonstrations and learn more.

Important: We’re providing demonstrations of how solvent blends can provide more effective cleaning. Please, oh please, do not experiment by randomly blending solvents (or cleaning agents) on your own. Chemistry can be far more exciting (and hazardous) than you bargain for. (Ref 1)

Permanent Marker

For the demonstration, frosted glass microscope slides were “contaminated” with a black permanent marker. Black permanent marker may not seem like a typical contaminant in manufacturing, but it is useful for demonstrating how mixtures can help in critical cleaning. The marker contains carbon black (particles) and a polymer binder, and most manufacturing processes also deal with mixed soils. When the marker dries, it is an excellent test soil because it mimics baked-on sooty varnished oils.

Check out Figures 1 – 3 to see how neither isopropyl alcohol (IPA) nor acetonitrile (ACN) alone dissolves the binder, but a 60%-40% blend of the two solvents does.

Figure 1. Ineffective cleaning with IPA

Figure 2. Slight solvency with ACN

Figure 3. Effective cleaning with 60% IPA-40% ACN mixture

Hansen Parameters

How this works can be explained by looking at the Hansen Solubility Parameters (HSP). HSP quantify the strengths of the three chemical forces that cause molecules to be attracted to one another and coalesce as a liquid or solid. These three forces are Polar (P), non-polar or Dispersive (D), and Hydrogen Bonding (H). Each solvent can be represented as a point in a 3-dimensional space with P, 2D, and H as coordinates as shown in Fig 4. Likewise, a contaminant (soil) adhering to a surface can be characterized by a sphere in this space. The closer the solvent coordinates are to the center of the sphere, the more effective that solvent is in solubilizing the contaminant.

Figure 4. A 3D plot of HSPs. Green points are inside the solubility sphere; red points are outside.
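For readers who like to see the geometry written out, here is the distance measure commonly used in the Hansen framework. The symbols are standard HSP notation rather than anything defined in this article: subscript s is the solvent (or blend), subscript c is the center of the soil's solubility sphere, and R0 is the sphere radius. The factor of 4 on the dispersive term is why the plot uses 2D rather than D as a coordinate.

$$
R_a^2 = 4\,(\delta_{D,s} - \delta_{D,c})^2 + (\delta_{P,s} - \delta_{P,c})^2 + (\delta_{H,s} - \delta_{H,c})^2,
\qquad
\mathrm{RED} = \frac{R_a}{R_0}
$$

A relative energy difference (RED) below 1 places the solvent inside the sphere; a RED above 1 places it outside.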

As shown in Figure 5, a screenshot from the PQCW session, both IPA and ACN lie outside the solubility sphere of the marker binder, so neither is very effective at removing the binder. However, the line between them passes through the sphere; the 60-40 mix lies inside the sphere, so this mixture works.

Figure 5. IPA, ACN, and a 60-40 blend
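The geometry in Figure 5 can be sketched in a few lines of Python. This is an illustration on paper only, not a recipe for the lab. It rests on several assumptions: the IPA and ACN numbers are commonly tabulated Hansen values that you should verify against your own data source, the 60-40 blend is treated as a volume-fraction-weighted average (the usual HSP convention, though the article does not say whether the percentages are by volume), and the soil sphere center and radius are hypothetical numbers chosen only to reproduce the picture. They are not measured values for the marker binder.

```python
import math

# Commonly tabulated Hansen parameters (dD, dP, dH) in MPa^0.5.
# Treat as assumptions and verify against your own data source.
IPA = (15.8, 6.1, 16.4)
ACN = (15.3, 18.0, 6.1)

# HYPOTHETICAL soil sphere, chosen for illustration only.
# These are NOT measured values for any marker binder.
SOIL_CENTER = (16.0, 13.0, 10.0)
SOIL_RADIUS = 6.0


def blend_hsp(a, b, frac_a):
    """Volume-fraction-weighted average of two solvents' HSP."""
    return tuple(frac_a * x + (1.0 - frac_a) * y for x, y in zip(a, b))


def hsp_distance(solvent, center):
    """Hansen distance Ra; the dispersive difference carries a factor of 4."""
    d_d, d_p, d_h = (s - c for s, c in zip(solvent, center))
    return math.sqrt(4.0 * d_d**2 + d_p**2 + d_h**2)


def red(solvent, center=SOIL_CENTER, radius=SOIL_RADIUS):
    """Relative energy difference: below 1 is inside the sphere."""
    return hsp_distance(solvent, center) / radius


candidates = {
    "IPA": IPA,
    "ACN": ACN,
    "60% IPA / 40% ACN": blend_hsp(IPA, ACN, 0.60),
}
for name, hsp in candidates.items():
    where = "inside" if red(hsp) < 1.0 else "outside"
    print(f"{name}: RED = {red(hsp):.2f} ({where} the sphere)")
```

With these illustrative numbers, both pure solvents land outside the sphere and the blend lands inside, which is the same pattern the slides showed. A calculation like this only suggests candidates; it says nothing about reactivity, flammability, or toxicity.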

A painless journey to the land of thermodynamics

How the HSP of a solvent or solvent mixture determines whether it dissolves the soil effectively can be explained using a thermodynamic quantity, Gibbs Free Energy. Thermodynamics was not my favorite college subject; it may not have been yours either. Here’s a relatively painless explanation.

“Nature is inherently lazy.” Any system tends toward a lower energy state, for instance by rolling down a hill or by cooling off as it transfers heat to a cooler body. Molecules stick to one another if the energy of the stuck molecules is lower than that of free ones. How does that relate to HSP? The surface of the solubility sphere marks the boundary between a positive and a negative change in Gibbs Free Energy, ΔG. Solvents or solvent blends with HSP outside the sphere have ΔG greater than zero and require additional energy to dissociate the soil from the surface; those inside the sphere have negative ΔG and give up energy, i.e., they go to a lower energy state, when the soil molecules dissolve and let go of the surface. Fig 6 is a GIF, developed by Tanner, showing when the blend of IPA and ACN lies outside the sphere (red) and when it is inside (green).
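The red-to-green transition in the animation can be approximated by sweeping the blend ratio and checking which side of the sphere surface each blend falls on. The sketch below is not Tanner's code. It assumes commonly tabulated HSP values for IPA and ACN, volume-fraction averaging for the blend, and a hypothetical soil sphere (center and radius invented for illustration). Following the free-energy picture described above, blends inside the sphere are labeled favorable.

```python
import math

# Commonly tabulated Hansen parameters (dD, dP, dH) in MPa^0.5; verify before use.
IPA = (15.8, 6.1, 16.4)
ACN = (15.3, 18.0, 6.1)

# HYPOTHETICAL soil sphere for illustration only (not measured data).
SOIL_CENTER = (16.0, 13.0, 10.0)
SOIL_RADIUS = 6.0


def red_for_ipa_percent(pct):
    """RED of an IPA/ACN blend containing `pct` percent IPA by volume."""
    f = pct / 100.0
    blend = [f * a + (1.0 - f) * b for a, b in zip(IPA, ACN)]
    d_d, d_p, d_h = (x - c for x, c in zip(blend, SOIL_CENTER))
    return math.sqrt(4.0 * d_d**2 + d_p**2 + d_h**2) / SOIL_RADIUS


def favorable_window(step_pct=5):
    """IPA percentages (on a grid) whose blends fall inside the sphere."""
    return [p for p in range(0, 101, step_pct) if red_for_ipa_percent(p) < 1.0]


for pct in range(0, 101, 10):
    r = red_for_ipa_percent(pct)
    side = "inside (favorable)" if r < 1.0 else "outside (unfavorable)"
    print(f"{pct:3d}% IPA / {100 - pct:3d}% ACN: RED = {r:.2f}, {side}")

window = favorable_window()
print(f"Grid points inside the sphere: {window[0]}% to {window[-1]}% IPA")
```

The width of the window depends entirely on the assumed sphere, so a real assessment needs a sphere fitted to solubility tests on the actual soil.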

Materials Compatibility

Ancient chemistry humor: Everyone would like a universal solvent, but how would you store it? Just as there is a solubility sphere for the soil, there is a solubility sphere for the material of construction. Each individual chemical may not be in the solubility sphere for materials of construction, but blends may be! Consider the potential for undesirable solvency with the part being cleaned or the process equipment, including seals. Polymeric materials can be especially problematic.

Don’t mix solvents on your own!

Blending solvents is far more complex – and fraught with greater hazards – than mixing paints or making cookies. We’re showing you blends to demonstrate solvency, but don’t just order a bunch of chemicals online and start mixing them. Don’t just start mixing chemicals you have in-house, even if you’ve used them for years.

There are good reasons to avoid blending chemicals. These cautionary notes apply to organic solvents and to aqueous formulations.

Heat may be generated when chemicals are mixed. Exothermic events, even highly exothermic or incendiary ones, may occur. In the non-solvent world, perhaps you recall the proviso not to mix acids with bases. Technicians (and university students) are injured every year by improperly diluting concentrated acids, which leads to Dr. Williams’ favorite quote (in a Boston accent), “do as you oughta, add acid to watah”. Doing the opposite causes boiling water to spray concentrated acid everywhere.

There can be unexpected reactivity. Explosions and large plumes of smoke are not desirable. Even if the fire department doesn’t show up, proceed cautiously. We’ve seen instances where manufacturing engineers combined cleaning agents based on dubious advice, only to find themselves exposed to choking fumes.

There can be unforeseen toxicity issues, including both immediate and long-term inhalation issues. Blends may be more readily absorbed through the skin. It can be a mistake, perhaps a fatal one, to assume that if Material A has a favorable toxicity profile and Material B has a favorable toxicity profile, a mixture of A and B will also have a favorable profile and be safe to use.

Successful chemists (chemists who survive to old age in a relatively intact state) work cautiously in labs and use appropriate protective equipment, engineering controls, and exhaust hoods.

Don’t go off on your own! Coordinate with your safety/environmental group, and get advice before trying something new.

More about mixtures

The world of cleaning, be it aqueous, semi-aqueous, co-solvent, or solvent cleaning, depends on understanding how mixtures work and on using them safely. We’ll tell you more in upcoming issues of Clean Source.

(Ref 1) https://www.poison.org/poison-statistics-national